This Chip Will Turn Your Phone Into a Supercomputer

IBM's "brain chip" could help computers surpass the human mind.

Recommendations are independently chosen by Reviewed's editors. Purchases made through the links below may earn us and our publishing partners a commission.

It may sound crazy, given how powerful they've become, but computers are still pretty stupid, at least compared with the human brain.

Your brain contains roughly 100 billion neurons, which together form about 100 trillion connections, or "synapses." Your smartphone, meanwhile, has a paltry one billion transistors.

Neurons and transistors aren't precisely equivalent, but that difference only tips the scales further in the brain's favor. A transistor is fundamentally a switch limited to simple binary yes/no answers, whereas neurons let your brain make logical leaps.

Think about it this way: yes, your phone can solve equations far too large for an unassisted mind, but how good is Siri at holding a simple conversation? When it comes to intuition, the brain still comes out on top.

But for how long?

Researchers at IBM are currently working on a new kind of computer chip that mimics the functions of the human brain. In other words, it’s able to recognize patterns and, in a sense, “learn.”

Unlike traditional processors, which execute instructions one after another, IBM's "TrueNorth" chip is biologically inspired. It features a vast network of “synaptic” transistors that can respond to the activity of others in the network and draw patterns out of seemingly random data. That is essentially what the brain does: neurons (the transistors, in this analogy) send electrical signals along axons, and the synapses where those axons connect to other neurons act as memory.

IBM aims to merge the "left-brain" behavior of traditional processors with the "right-brain" activity of the TrueNorth chip to create what it calls "holistic computing intelligence."

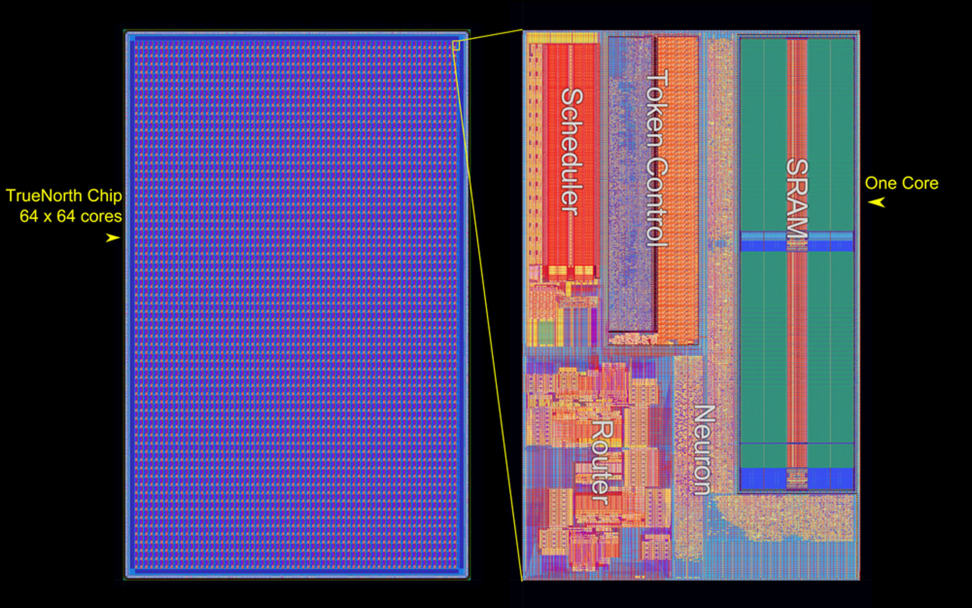

The TrueNorth chip, about the size of a postage stamp, contains 5.4 billion transistors within a network of more than 4,000 “neurosynaptic cores.” Together, those cores provide 256 million programmable synapses.

While that’s far short of the 100 trillion synapses found in the human brain, the chip’s architecture is unprecedented. Like the brain, it's event-driven, meaning its artificial neurons fire only when there is a signal to process. That keeps the chip cool and sharply cuts its energy use. Traditional processors, by contrast, are driven by a clock and keep running whether or not there is new work to do.
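To see roughly where the 256 million figure comes from, here is a back-of-the-envelope sketch in Python. It assumes the per-core layout IBM has published for TrueNorth (4,096 cores, each a crossbar of 256 input axons by 256 neurons), which this article doesn't spell out. Under that assumption the total works out to 256 × 2^20 synapses, or about 268 million in decimal terms, and the comparison with the brain's 100 trillion follows directly.

```python
# Back-of-the-envelope synapse count for TrueNorth.
# Assumption: 4,096 cores, each a 256-axon x 256-neuron crossbar
# (IBM's published layout; the article itself only says "more than 4,000" cores).
CORES = 4096
AXONS_PER_CORE = 256
NEURONS_PER_CORE = 256
BRAIN_SYNAPSES = 100 * 10**12  # the article's "about 100 trillion"

synapses_per_core = AXONS_PER_CORE * NEURONS_PER_CORE  # one synapse per crossbar point
total_synapses = CORES * synapses_per_core
total_neurons = CORES * NEURONS_PER_CORE
brain_ratio = BRAIN_SYNAPSES // total_synapses  # chips' worth of synapses in one brain

print(f"{total_synapses:,} synapses ({total_synapses // 2**20} x 2^20)")
print(f"{total_neurons:,} neurons")
print(f"the brain has ~{brain_ratio:,}x as many synapses")
```

By this rough count, matching the brain's synapse total would take several hundred thousand TrueNorth chips.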

Speaking to The Register, IBM Fellow Dr. Dharmendra Modha explained the differences between traditional processors and TrueNorth:

> Today's computers—for example, ARM cores—separate memory from computation via a bus. This requires a lot of energy and bandwidth to move data to-and-from the memory. Additionally, the processor is sequential, synchronous, and fault-prone. In contrast, TrueNorth architecture is a network of neurosynaptic cores. Each neurosynaptic core tightly integrates memory and computation thus significantly reducing energy and bandwidth challenges. All cores are distributed and operate in parallel. Each core computes in an event-driven fashion and different cores communicate in an event-driven fashion.
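The difference between event-driven and clock-driven computation can be illustrated with a toy model. The Python sketch below uses a simplified integrate-and-fire neuron; it is not TrueNorth's actual neuron model, and the threshold, weights, and spike times are made up for illustration. In clock-driven mode the neuron is evaluated on every tick; in event-driven mode it does work only when a spike arrives. Both modes produce the same output, but the event-driven one performs a tiny fraction of the updates.

```python
from dataclasses import dataclass


@dataclass
class Neuron:
    """Toy integrate-and-fire neuron (no leak), for illustration only."""
    threshold: int = 3   # hypothetical firing threshold
    potential: int = 0   # accumulated input
    updates: int = 0     # how many times the neuron did any work

    def receive(self, weight: int) -> bool:
        """Add one input; fire (return True) and reset if the threshold is reached."""
        self.updates += 1
        self.potential += weight
        if self.potential >= self.threshold:
            self.potential = 0
            return True
        return False


def simulate(spikes: dict[int, int], ticks: int, event_driven: bool):
    """Return (ticks at which the neuron fired, number of neuron updates)."""
    neuron = Neuron()
    fired_at = []
    for t in range(ticks):
        weight = spikes.get(t)
        if weight is None:
            if event_driven:
                continue  # no spike arrived, so an event-driven core stays idle
            weight = 0    # a clocked design still evaluates the neuron this tick
        if neuron.receive(weight):
            fired_at.append(t)
    return fired_at, neuron.updates


# Hypothetical input: three spikes spread across 1,000 ticks.
spikes = {10: 1, 250: 1, 700: 2}
clocked = simulate(spikes, ticks=1000, event_driven=False)
evented = simulate(spikes, ticks=1000, event_driven=True)
print("clock-driven:", clocked)  # ([700], 1000)
print("event-driven:", evented)  # ([700], 3)
```

The Python loop itself still steps through every tick, since it is only a simulation; the `updates` counter measures how often the neuron actually does work, which is the quantity that costs energy on real hardware.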

Altogether, the chip’s design could eventually make your smartphone as powerful as a supercomputer.

“The architecture can solve a wide class of problems from vision, audition, and multi-sensory fusion, and has the potential to revolutionize the computer industry by integrating brain-like capability into devices where computation is constrained by power and speed,” Modha added.

Conventional chips are rapidly nearing a fundamental roadblock: the atom. Within the past 60 years, transistors have shrunk from the size of a human thumb to the size of a large molecule. Pretty soon, they’ll be the size of an atom. At that point, they can't shrink any further, and the performance gains that have come from miniaturization will hit a natural limit.

But by rethinking chip architecture rather than simply shrinking transistors, researchers are working around this nano-sized roadblock. At the very least, IBM’s neurosynaptic chips will tide us over until quantum computing arrives, which may still be a long way off.